EC2 Instance Terminated but Starts by Itself Again: What May Be the Reason?

For the 7th straight year, Gartner placed Amazon Web Services in the "Leaders" quadrant. Forbes also reported that AWS Certified Solutions Architect leads the 15 top-paying IT certifications. Undoubtedly, the AWS Solution Architect position is one of the most sought after among IT jobs. You, too, can maximize the cloud computing career opportunities that are sure to come your way by taking AWS Certification training with Edureka.

Why AWS Architect Interview Questions?

The AWS Solution Architect Role: With regards to AWS, a Solution Architect would design and define AWS architecture for existing systems, migrating them to cloud architectures as well as developing technical road-maps for future AWS cloud implementations. So, through this AWS Architect interview questions article, I will bring you top and frequently asked AWS interview questions. Gain proficiency in designing, planning, and scaling cloud implementation with the AWS Masters Program.

The following is the outline of this article:

- Section 1: What is Cloud Computing?

- Section 2: Amazon EC2 Interview Questions

- Section 3: Amazon Storage

- Section 4: AWS VPC

- Section 5: Amazon Database

- Section 6: AWS Auto Scaling, AWS Load Balancer

- Section 7: CloudTrail, Route 53

- Section 8: AWS SQS, AWS SNS, AWS SES, AWS ElasticBeanstalk

- Section 9: AWS OpsWorks, AWS KMS

Now in every section, we will start with basic AWS interview questions, and then move towards AWS interview questions and answers for experienced people, which are more technically challenging.

AWS Interview Questions And Answers 2022 | AWS Solution Architect Training | Edureka

In this Edureka AWS Interview Questions video, you will get to know the questions which you may face in the interview; the concepts explained here are essential for any Solution Architect in the making.

Section 1: What is Cloud Computing? Can you talk about and compare any two popular Cloud Service Providers?

For a detailed discussion on this topic, please refer to our Cloud Computing blog. Following is the comparison between two of the most popular Cloud Service Providers:

Amazon Web Services Vs Microsoft Azure

| Parameters | AWS | Azure |
| --- | --- | --- |
| Initiation | 2006 | 2010 |
| Market Share | 4x | x |
| Implementation | Fewer Options | More Experimentation Possible |
| Features | Widest Range Of Options | Good Range Of Options |
| App Hosting | AWS Not As Good As Azure | Azure Is Better |
| Development | Varied & Great Features | Varied & Great Features |
| IaaS Offerings | Good Market Hold | Better Offerings Than AWS |

1. Try this AWS scenario based interview question. I have some private servers on my premises, also I have distributed some of my workload on the public cloud, what is this architecture called?

- Virtual Private Network

- Private Cloud

- Virtual Private Cloud

- Hybrid Cloud

Answer D.

Explanation: This type of architecture would be a hybrid cloud. Why? Because we are using both the public cloud and your on-premises servers, i.e. the private cloud. To make this hybrid architecture easy to use, wouldn't it be better if your private and public cloud were all on the same network (virtually)? This is established by including your public cloud servers in a virtual private cloud, and connecting this virtual cloud with your on-premises servers using a VPN (Virtual Private Network).

Learn to design, develop, and manage a robust, secure, and highly available cloud-based solution for your organization's needs with the Google Cloud Platform Course.

Section 2: Amazon EC2 Interview Questions

For a detailed discussion on this topic, please refer to our EC2 AWS blog.

2. What does the following command do with respect to the Amazon EC2 security groups?

ec2-create-group CreateSecurityGroup

- Groups the user created security groups into a new group for easy access.

- Creates a new security group for use with your account.

- Creates a new group inside the security group.

- Creates a new rule inside the security group.

Answer B.

Explanation: A security group is just like a firewall; it controls the traffic in and out of your instance. In AWS terms, the inbound and outbound traffic. The command mentioned is pretty straightforward: it says create security group, and does the same. Moving along, once your security group is created, you can add different rules to it. For example, if you have an RDS instance, to access it you have to add the public IP address of the machine from which you want to access the instance to its security group.

3. Here is an AWS scenario based interview question. You have a video transcoding application. The videos are processed according to a queue. If the processing of a video is interrupted in one instance, it is resumed in another instance. Currently there is a huge backlog of videos which needs to be processed; for this you need to add more instances, but you need these instances only until your backlog is reduced. Which of these would be an efficient way to do it?

You should be using an On-Demand instance for the same. Why? First of all, the workload has to be processed now, meaning it is urgent. Secondly, you don't need the instances once your backlog is cleared, therefore Reserved Instances are out of the picture. And since the work is urgent, you cannot stop the work on your instance just because the Spot Price spiked, therefore Spot Instances shall also not be used. Hence On-Demand instances shall be the right choice in this case.

4. You have a distributed application that periodically processes large volumes of data across multiple Amazon EC2 instances. The application is designed to recover gracefully from Amazon EC2 instance failures. You are required to accomplish this task in the most cost effective way.

Which of the following will meet your requirements?

- Spot Instances

- Reserved instances

- Dedicated instances

- On-Demand instances

Answer: A

Explanation: Since the work we are addressing here is not continuous, a Reserved Instance shall be idle at times; the same goes for On-Demand instances. Also, it does not make sense to launch an On-Demand instance whenever work comes up, since it is expensive. Hence Spot Instances will be the right fit because of their low rates and no long term commitments.

5. How is stopping and terminating an instance different from each other?

Starting, stopping and terminating are the three states in an EC2 instance, let's discuss them in detail:

- Stopping and Starting an instance: When an instance is stopped, the instance performs a normal shutdown and then transitions to a stopped state. All of its Amazon EBS volumes remain attached, and you can start the instance again at a later time. You are not charged for additional instance hours while the instance is in a stopped state.

- Terminating an instance: When an instance is terminated, the instance performs a normal shutdown, then the attached Amazon EBS volumes are deleted unless the volume's deleteOnTermination attribute is set to false. The instance itself is also deleted, and you can't start the instance again at a later time.
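The termination rule for attached volumes can be sketched in a few lines. This is a minimal illustration in plain Python, not an AWS API call: the `Volume` class, the volume IDs, and the function name are all hypothetical, and only the `deleteOnTermination` behavior described above is modeled.

```python
from dataclasses import dataclass


@dataclass
class Volume:
    volume_id: str               # hypothetical identifier, for illustration only
    delete_on_termination: bool  # mirrors the deleteOnTermination attribute


def volumes_surviving_termination(volumes):
    """Return the IDs of volumes that remain after the instance is terminated."""
    # A volume is deleted on termination unless its flag is set to false.
    return [v.volume_id for v in volumes if not v.delete_on_termination]


attached = [
    Volume("vol-root", delete_on_termination=True),
    Volume("vol-data", delete_on_termination=False),
]
print(volumes_surviving_termination(attached))  # ['vol-data']
```

Stopping an instance, by contrast, would leave both volumes attached.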

6. If I want my instance to run on single-tenant hardware, which value do I have to set the instance's tenancy attribute to?

- Dedicated

- Isolated

- One

- Reserved

Answer A.

Explanation: The instance tenancy attribute should be set to Dedicated. The rest of the listed values are invalid.

7. When will you incur costs with an Elastic IP address (EIP)?

- When an EIP is allocated.

- When it is allocated and associated with a running instance.

- When it is allocated and associated with a stopped instance.

- Costs are incurred regardless of whether the EIP is associated with a running instance.

Answer C.

Explanation: You are not charged if only one Elastic IP address is attached to your running instance. But you do get charged in the following conditions:

- When you use more than one Elastic IP with your instance.

- When your Elastic IP is attached to a stopped instance.

- When your Elastic IP is not attached to any instance.
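The three conditions above can be written as a small decision function. This is only a sketch of the rules as stated in this article; the function and parameter names are made up for illustration, and actual AWS pricing may differ or change over time.

```python
def eip_incurs_charge(associated: bool, instance_running: bool,
                      eips_on_instance: int = 1) -> bool:
    """Return True if an Elastic IP is billed under the rules listed above."""
    if not associated:
        return True   # allocated but not attached to any instance
    if not instance_running:
        return True   # attached to a stopped instance
    # Attached to a running instance: only additional EIPs are billed.
    return eips_on_instance > 1


print(eip_incurs_charge(associated=True, instance_running=True))    # False
print(eip_incurs_charge(associated=True, instance_running=False))   # True
print(eip_incurs_charge(associated=False, instance_running=False))  # True
```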

8. How is a Spot Instance different from an On-Demand instance or Reserved Instance?

First of all, let's understand that Spot Instances, On-Demand instances and Reserved Instances are all models for pricing. Moving along, Spot Instances provide the ability for customers to purchase compute capacity with no upfront commitment, at hourly rates usually lower than the On-Demand rate in each region. Spot Instances are just like bidding; the bidding price is called the Spot Price. The Spot Price fluctuates based on supply and demand for instances, but customers will never pay more than the maximum price they have specified. If the Spot Price moves higher than a customer's maximum price, the customer's EC2 instance will be shut down automatically. But the reverse is not true: if the Spot Price comes down again, your EC2 instance will not be launched automatically; one has to do that manually. With Spot and On-Demand instances, there is no commitment for the duration from the user side; however, with Reserved Instances one has to stick to the time period that one has chosen.
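To make the interruption behavior concrete, here is a toy simulation that follows the description above: the instance runs until the Spot Price exceeds the maximum price, and it is not relaunched when the price drops again. The price series and the function name are invented for illustration; this does not call any AWS service.

```python
def simulate_spot(prices, max_price):
    """Return one boolean per period: is the Spot Instance still running?"""
    running = True
    timeline = []
    for price in prices:
        if price > max_price:
            running = False  # shut down once the Spot Price exceeds the maximum
        # No automatic relaunch: 'running' never flips back to True here.
        timeline.append(running)
    return timeline


hourly_prices = [0.03, 0.04, 0.07, 0.04, 0.03]  # made-up Spot Prices in USD
print(simulate_spot(hourly_prices, max_price=0.05))
# [True, True, False, False, False]
```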

9. Are the Reserved Instances available for Multi-AZ Deployments?

- Multi-AZ Deployments are only available for Cluster Compute instances types

- Available for all instance types

- Only available for M3 instance types

- Not Available for Reserved Instances

Answer B.

Explanation: Reserved Instances is a pricing model, which is available for all instance types in EC2.

10. How to use the processor state control feature available on the c4.8xlarge instance?

The processor state control consists of two states:

- The C state – Sleep state varying from c0 to c6, c6 being the deepest sleep state for a processor.

- The P state – Performance state, p0 being the highest and p15 being the lowest possible frequency.

Now, why the C state and P state? Processors have cores, and these cores need thermal headroom to boost their performance. Since all the cores are on the same processor, the temperature should be kept at an optimal level so that all the cores can perform at the highest performance.

Now how will these states help in that? If a core is put into a sleep state, it will reduce the overall temperature of the processor and hence other cores can perform better. The same can be synchronized with other cores, so that the processor can boost as many cores as it can by timely putting other cores to sleep, and thus get an overall performance boost.

In conclusion, the C and P states can be customized in some EC2 instances like the c4.8xlarge instance, and thus you can customize the processor according to your workload.

11. What kind of network performance parameters can you expect when you launch instances in a cluster placement group?

The network performance depends on the instance type and network performance specification. If launched in a placement group, you can expect up to

- 10 Gbps in a single flow,

- 20 Gbps in multiflow, i.e. full duplex

- Network traffic outside the placement group will be limited to 5 Gbps (full duplex).

12. To deploy a 4 node cluster of Hadoop in AWS which instance type can be used?

First let's understand what actually happens in a Hadoop cluster. The Hadoop cluster follows a master-slave concept. The master machine processes all the data, while slave machines store the data and act as data nodes. Since all the storage happens at the slaves, a higher capacity hard disk would be recommended, and since the master does all the processing, a higher RAM and a much better CPU is required. Therefore, you can select the configuration of your machine depending on your workload. For example, in this case c4.8xlarge will be preferred for the master machine, whereas for the slave machines we can select the i2.large instance. If you don't want to deal with configuring your instance and installing a Hadoop cluster manually, you can straight away launch an Amazon EMR (Elastic Map Reduce) instance which automatically configures the servers for you. You dump your data to be processed in S3, EMR picks it from there, processes it, and dumps it back into S3.

13. Where do you think an AMI fits, when you are designing an architecture for a solution?

AMIs (Amazon Machine Images) are like templates of virtual machines, and an instance is derived from an AMI. AWS offers pre-baked AMIs which you can choose while you are launching an instance; some AMIs are not free and can be bought from the AWS Marketplace. You can also choose to create your own custom AMI, which would help you save space on AWS. For example, if you don't need a set of software on your installation, you can customize your AMI to leave it out. This makes it cost efficient, since you are removing the unwanted things.

14. How do you choose an Availability Zone?

Let's understand this through an example. Consider a company which has a user base in India as well as in the US.

Let us see how we will choose the region for this use case:

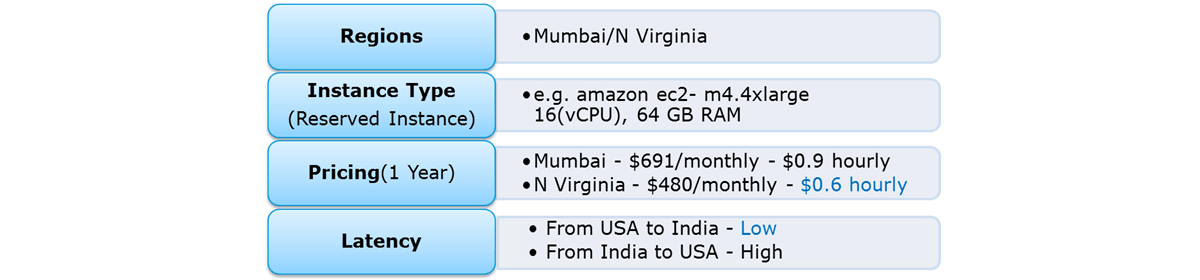

So, with reference to the above figure, the regions to choose between are Mumbai and North Virginia. Now let us first compare the pricing: you have hourly prices, which can be converted to your per month figure. Here North Virginia emerges as a winner. But pricing cannot be the only parameter to consider. Performance should also be kept in mind, hence let's look at latency as well. Latency basically is the time that a server takes to respond to your requests, i.e. the response time. North Virginia wins again!

So, in conclusion, North Virginia should be chosen for this use case.

15. Is one Elastic IP address enough for every instance that I have running?

Depends! Every instance comes with its own private and public address. The private address is associated exclusively with the instance and is returned to Amazon EC2 only when it is stopped or terminated. Similarly, the public address is associated exclusively with the instance until it is stopped or terminated. However, this can be replaced by the Elastic IP address, which stays with the instance as long as the user doesn't manually detach it. But what if you are hosting multiple websites on your EC2 server? In that case you may require more than one Elastic IP address.

16. What are the best practices for Security in Amazon EC2?

There are several best practices to secure Amazon EC2. A few of them are given below:

- Use AWS Identity and Access Management (IAM) to control access to your AWS resources.

- Restrict access by only allowing trusted hosts or networks to access ports on your instance.

- Review the rules in your security groups regularly, and ensure that you apply the principle of least privilege: only open up permissions that you require.

- Disable password-based logins for instances launched from your AMI. Passwords can be found or cracked, and are a security risk.

Section 3: Amazon Storage

17. Another scenario based interview question. You need to configure an Amazon S3 bucket to serve static assets for your public-facing web application. Which method will ensure that all objects uploaded to the bucket are set to public read?

- Set permissions on the object to public read during upload.

- Configure the bucket policy to set all objects to public read.

- Use AWS Identity and Access Management roles to set the bucket to public read.

- Amazon S3 objects default to public read, so no action is needed.

Answer B.

Explanation: Rather than making changes to every object, it's better to set the policy for the whole bucket. IAM is used to give more granular permissions; since this is a website, all objects should be public by default.
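As a rough illustration of the bucket-policy approach, the snippet below builds a policy document that grants public read on every object in a bucket and prints it as JSON. The bucket name `example-static-assets` and the `Sid` are placeholders chosen for this sketch; the policy is only constructed locally and not applied to any bucket.

```python
import json

BUCKET_NAME = "example-static-assets"  # hypothetical bucket name

public_read_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "PublicReadGetObject",  # free-form label, chosen for this example
            "Effect": "Allow",
            "Principal": "*",              # anyone
            "Action": "s3:GetObject",      # read access to objects only
            "Resource": f"arn:aws:s3:::{BUCKET_NAME}/*",  # every object in the bucket
        }
    ],
}

print(json.dumps(public_read_policy, indent=2))
```

Because the resource ends in `/*`, the statement covers objects uploaded later as well, which is why no per-object permission is needed.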

18. A customer wants to leverage Amazon Simple Storage Service (S3) and Amazon Glacier as part of their backup and archive infrastructure. The customer plans to use third-party software to support this integration. Which approach will limit the access of the third-party software to only the Amazon S3 bucket named "company-backup"?

- A custom bucket policy limited to the Amazon S3 API in the Amazon Glacier archive "company-backup"

- A custom bucket policy limited to the Amazon S3 API in "company-backup"

- A custom IAM user policy limited to the Amazon S3 API for the Amazon Glacier archive "company-backup".

- A custom IAM user policy limited to the Amazon S3 API in "company-backup".

Answer D.

Explanation: Taking a cue from the previous question, this use case involves more granular permissions, hence IAM would be used here.
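A custom IAM user policy of this kind might look like the sketch below, which allows Amazon S3 actions only on the "company-backup" bucket and its objects. The choice of `s3:*` as the action set is an assumption made for illustration; a real policy for third-party software would likely list a narrower set of actions.

```python
import json

BUCKET = "company-backup"  # bucket name taken from the question

backup_user_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": "s3:*",  # assumption: all S3 actions, for brevity
            "Resource": [
                f"arn:aws:s3:::{BUCKET}",    # the bucket itself (e.g. listing)
                f"arn:aws:s3:::{BUCKET}/*",  # the objects inside it
            ],
        }
    ],
}

print(json.dumps(backup_user_policy, indent=2))
```

Since IAM denies anything not explicitly allowed, a user with only this policy has no access to other buckets.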

19. Can S3 be used with EC2 instances, if yes, how?

Yes, it can be used for instances with root devices backed by local instance storage. By using Amazon S3, developers have access to the same highly scalable, reliable, fast, inexpensive data storage infrastructure that Amazon uses to run its own global network of web sites. In order to execute systems in the Amazon EC2 environment, developers use the tools provided to load their Amazon Machine Images (AMIs) into Amazon S3 and to move them between Amazon S3 and Amazon EC2.

Another use case could be for websites hosted on EC2 to load their static content from S3.

For a detailed discussion on S3, please refer to our S3 AWS blog.

20. A customer implemented AWS Storage Gateway with a gateway-cached volume at their main office. An event takes the link between the main and branch office offline. Which methods will enable the branch office to access their data?

- Restore by implementing a lifecycle policy on the Amazon S3 bucket.

- Make an Amazon Glacier Restore API call to load the files into another Amazon S3 bucket within four to six hours.

- Launch a new AWS Storage Gateway instance AMI in Amazon EC2, and restore from a gateway snapshot.

- Create an Amazon EBS volume from a gateway snapshot, and mount it to an Amazon EC2 instance.

Answer C.

Explanation: The fastest way to do it would be launching a new storage gateway instance. Why? Since time is the key factor which drives every business, troubleshooting this problem would take more time. Instead, we can just restore the previous working state of the storage gateway on a new instance.

21. When you need to move data over long distances using the internet, for instance across countries or continents to your Amazon S3 bucket, which method or service will you use?

- Amazon Glacier

- Amazon CloudFront

- Amazon Transfer Acceleration

- Amazon Snowball

Answer C.

Explanation: You would not use Snowball, because for now, the Snowball service does not support cross-region data transfer, and since we are transferring across countries, Snowball cannot be used. Transfer Acceleration shall be the right choice here as it speeds up your data transfer by up to 300% compared to normal data transfer speed, with the use of optimized network paths and Amazon's content delivery network.

22. How can you speed up data transfer in Snowball?

The data transfer speed can be increased in the following ways:

- By performing multiple copy operations at once, i.e. if the workstation is powerful enough, you can initiate multiple cp commands, each from a different terminal, on the same Snowball device.

- Copying from multiple workstations to the same Snowball.

- Transferring large files or creating a batch of small files; this will reduce the encryption overhead.

- Eliminating unnecessary hops, i.e. make a setup where the source machine(s) and the Snowball are the only machines active on the switch being used; this can hugely improve performance.

Section 4: AWS VPC

23. If you want to launch Amazon Elastic Compute Cloud (EC2) instances and assign each instance a predetermined private IP address you should:

- Launch the instance from a private Amazon Machine Image (AMI).

- Assign a group of sequential Elastic IP addresses to the instances.

- Launch the instances in the Amazon Virtual Private Cloud (VPC).

- Launch the instances in a Placement Group.

Answer C.

Explanation: The best way of connecting to your cloud resources (e.g. EC2 instances) from your own data center (e.g. private cloud) is a VPC. Once you connect your data center to the VPC in which your instances are present, each instance is assigned a private IP address which can be accessed from your data center. Hence, you can access your public cloud resources as if they were on your own network.

24. Can I connect my corporate datacenter to the Amazon Cloud?

Yes, you can do this by establishing a VPN (Virtual Private Network) connection between your company's network and your VPC (Virtual Private Cloud); this will allow you to interact with your EC2 instances as if they were within your existing network.

25. Is it possible to change the private IP addresses of an EC2 instance while it is running/stopped in a VPC?

The primary private IP address is attached to the instance throughout its lifetime and cannot be changed; however, secondary private addresses can be unassigned, assigned or moved between interfaces or instances at any point.

26. Why do you make subnets?

- Because there is a shortage of networks

- To efficiently use networks that have a large no. of hosts.

- Because there is a shortage of hosts.

- To efficiently use networks that have a small no. of hosts.

Answer B.

Explanation: If there is a network which has a large no. of hosts, managing all these hosts can be a tedious job. Therefore we divide this network into subnets (sub-networks) so that managing these hosts becomes simpler.
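Dividing a large network into subnets can be tried out with Python's standard `ipaddress` module. The `10.0.0.0/16` range and the `/24` subnet size below are example values chosen for this sketch, not values from the question.

```python
import ipaddress

# One large network with many host addresses (example range).
network = ipaddress.ip_network("10.0.0.0/16")

# Split it into smaller /24 subnets that are easier to manage.
subnets = list(network.subnets(new_prefix=24))

print(network.num_addresses)     # 65536
print(len(subnets))              # 256
print(subnets[0], subnets[-1])   # 10.0.0.0/24 10.0.255.0/24
print(subnets[0].num_addresses)  # 256
```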

27. Which of the following is true?

- You can attach multiple route tables to a subnet

- You can attach multiple subnets to a route table

- Both A and B

- None of these.

Answer B.

Explanation: Route tables are used to route network packets, therefore having multiple route tables in a subnet would lead to confusion as to where a packet has to go. Therefore, there is only one route table per subnet, and since a route table can have any no. of records or entries, attaching multiple subnets to a route table is possible.
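The one-route-table-per-subnet relationship can be modeled with a plain dictionary keyed by subnet. This is a toy model, not the VPC API: the `Vpc` class and the `subnet-a` / `rtb-1` style identifiers are made up for illustration.

```python
class Vpc:
    def __init__(self):
        # subnet id -> route table id; a dict key can hold only one value,
        # which mirrors "one route table per subnet".
        self.subnet_route_table = {}

    def associate(self, subnet_id, route_table_id):
        # Associating a subnet again replaces its previous route table.
        self.subnet_route_table[subnet_id] = route_table_id

    def subnets_of(self, route_table_id):
        # A route table may be shared by any number of subnets.
        return sorted(s for s, rt in self.subnet_route_table.items()
                      if rt == route_table_id)


vpc = Vpc()
vpc.associate("subnet-a", "rtb-1")
vpc.associate("subnet-b", "rtb-1")
print(vpc.subnets_of("rtb-1"))  # ['subnet-a', 'subnet-b']

vpc.associate("subnet-a", "rtb-2")  # subnet-a switches route tables
print(vpc.subnets_of("rtb-1"))  # ['subnet-b']
print(vpc.subnets_of("rtb-2"))  # ['subnet-a']
```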

28. In CloudFront what happens when content is NOT present at an Edge location and a request is made to it?

- An Error "404 not found" is returned

- CloudFront delivers the content directly from the origin server and stores it in the cache of the edge location

- The request is kept on hold till content is delivered to the edge location

- The request is routed to the next closest edge location

Answer B.

Explanation: CloudFront is a content delivery system, which caches data at the edge location nearest to the user, to reduce latency. If data is not present at an edge location, the first time the data may get transferred from the origin server, but from the next time, it will be served from the cached edge.
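The cache-miss behavior described above is essentially a read-through cache. The sketch below imitates it with a dictionary standing in for the origin server; the `EdgeLocation` class and the `/logo.png` key are hypothetical and only illustrate the idea, not how CloudFront is implemented.

```python
class EdgeLocation:
    def __init__(self, origin):
        self.origin = origin     # dict standing in for the origin server
        self.cache = {}          # content cached at this edge location
        self.origin_fetches = 0  # how often the origin had to be contacted

    def get(self, key):
        if key not in self.cache:
            # Cache miss: fetch from the origin and keep a copy at the edge.
            self.origin_fetches += 1
            self.cache[key] = self.origin[key]
        return self.cache[key]


edge = EdgeLocation({"/logo.png": b"image-bytes"})
edge.get("/logo.png")       # first request goes to the origin
edge.get("/logo.png")       # second request is served from the edge cache
print(edge.origin_fetches)  # 1
```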

29. If I'm using Amazon CloudFront, can I use Direct Connect to transfer objects from my own data center?

Yes. Amazon CloudFront supports custom origins including origins from outside of AWS. With AWS Direct Connect, you will be charged with the respective data transfer rates.

30. If my AWS Direct Connect fails, will I lose my connectivity?

If a backup AWS Direct Connect has been configured, in the event of a failure it will switch over to the second one. It is recommended to enable Bidirectional Forwarding Detection (BFD) when configuring your connections to ensure faster detection and failover. On the other hand, if you have configured a backup IPsec VPN connection instead, all VPC traffic will failover to the backup VPN connection automatically. Traffic to/from public resources such as Amazon S3 will be routed over the Internet. If you do not have a backup AWS Direct Connect link or an IPsec VPN link, then Amazon VPC traffic will be dropped in the event of a failure.
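The failover cases for VPC traffic can be summarized as a small decision function. It only restates the three cases from the answer above; the function name and the returned strings are chosen for this sketch.

```python
def vpc_traffic_after_dx_failure(has_backup_dx: bool, has_backup_vpn: bool) -> str:
    """Describe what happens to VPC traffic when the primary Direct Connect fails."""
    if has_backup_dx:
        return "fails over to the backup Direct Connect link"
    if has_backup_vpn:
        return "fails over to the backup IPsec VPN"
    return "dropped"


print(vpc_traffic_after_dx_failure(True, False))   # fails over to the backup Direct Connect link
print(vpc_traffic_after_dx_failure(False, True))   # fails over to the backup IPsec VPN
print(vpc_traffic_after_dx_failure(False, False))  # dropped
```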

Section 5: Amazon Database

31. If I launch a standby RDS instance, will it be in the same Availability Zone as my primary?

- Only for Oracle RDS types

- Yes

- Only if it is configured at launch

- No

Answer D.

Explanation: No, since the purpose of having a standby instance is to avoid an infrastructure failure (if it happens), therefore the standby instance is stored in a different Availability Zone, which is a physically different independent infrastructure.

32. When would I prefer Provisioned IOPS over Standard RDS storage?

- If you have batch-oriented workloads

- If you use production online transaction processing (OLTP) workloads.

- If you have workloads that are not sensitive to consistent performance

- All of the above

Answer A.

Explanation: Provisioned IOPS delivers high IO rates, but on the other hand it is expensive as well. Batch processing workloads do not require manual intervention and enable full utilization of systems, therefore Provisioned IOPS will be preferred for batch oriented workloads.

33. How is Amazon RDS, DynamoDB and Redshift different?

- Amazon RDS is a database management service for relational databases; it manages patching, upgrading, backing up of data etc. of databases for you without your intervention. RDS is a DB management service for structured data only.

- DynamoDB, on the other hand, is a NoSQL database service; NoSQL deals with unstructured data.

- Redshift is an entirely different service; it is a data warehouse product and is used in data analysis.

34. If I am running my DB Instance as a Multi-AZ deployment, can I use the standby DB Instance for read or write operations along with the primary DB instance?

- Yes

- Only with MySQL based RDS

- Only for Oracle RDS instances

- No

Answer D.

Explanation: No, the standby DB instance cannot be used in parallel with the primary DB instance, as the former is solely used for standby purposes; it cannot be used unless the primary instance goes down.

35. Your company's branch offices are all over the world, they use a software with a multi-regional deployment on AWS, they use MySQL 5.6 for data persistence.

The task is to run an hourly batch process and read data from every region to compute cross-regional reports which will be distributed to all the branches. This should be done in the shortest time possible. How will you build the DB architecture in order to meet the requirements?

- For each regional deployment, use RDS MySQL with a master in the region and a read replica in the HQ region

- For each regional deployment, use MySQL on EC2 with a master in the region and send hourly EBS snapshots to the HQ region

- For each regional deployment, use RDS MySQL with a master in the region and send hourly RDS snapshots to the HQ region

- For each regional deployment, use MySQL on EC2 with a master in the region and use S3 to copy data files hourly to the HQ region

Answer A.

Explanation: For this we will take an RDS instance as a master, because it will manage our database for us, and since we have to read from every region, we'll put a read replica of this instance in every region where the data has to be read from. Option C is not correct since putting a read replica would be more efficient than putting a snapshot; a read replica can be promoted if needed to an independent DB instance, but with a DB snapshot it becomes mandatory to launch a separate DB Instance.

36. Can I run more than one DB instance for Amazon RDS for free?

Yes. You can run more than one Single-AZ Micro database instance, and for free at that! However, any use exceeding 750 instance hours, across all Amazon RDS Single-AZ Micro DB instances, across all eligible database engines and regions, will be billed at standard Amazon RDS prices. For example: if you run two Single-AZ Micro DB instances for 400 hours each in a single month, you will accrue 800 instance hours of usage, of which 750 hours will be free. You will be billed for the remaining 50 hours at the standard Amazon RDS price.
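The arithmetic in this example is simple enough to check in code. The sketch below assumes the 750-hour monthly allowance quoted above and pools the hours of all instances before subtracting it; the function name is illustrative.

```python
FREE_TIER_HOURS = 750  # monthly free instance hours, as stated above


def billable_hours(hours_per_instance):
    """Hours billed at the standard price after pooling usage across instances."""
    total = sum(hours_per_instance)
    return max(0, total - FREE_TIER_HOURS)


print(billable_hours([400, 400]))  # 50  (800 hours accrued, 750 free)
print(billable_hours([300, 300]))  # 0   (600 hours, all within the free tier)
```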

For a detailed discussion on this topic, please refer to our RDS AWS blog.

37. Which AWS services will you use to collect and process e-commerce data for near real-time analysis?

- Amazon ElastiCache

- Amazon DynamoDB

- Amazon Redshift

- Amazon Elastic MapReduce

Answer B,C.

Explanation: DynamoDB is a fully managed NoSQL database service. DynamoDB can therefore be fed any type of unstructured data, which can be data from e-commerce websites as well, and later, an analysis can be done on it using Amazon Redshift. We are not using Elastic MapReduce, since a near real-time analysis is needed.

38. Can I retrieve only a specific element of the data, if I have a nested JSON data in DynamoDB?

Yes. When using the GetItem, BatchGetItem, Query or Scan APIs, you can define a Projection Expression to determine which attributes should be retrieved from the table. Those attributes can include scalars, sets, or elements of a JSON document.
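To give a feel for what selecting one element of a nested document means, here is a plain-Python sketch that resolves a dotted path with list indexes against a nested item. It is not DynamoDB's implementation and does not call the service; the `resolve_path` helper, the sample item, and the path string are all hypothetical, with the path merely written in a style similar to a document path.

```python
import re


def resolve_path(item, path):
    """Follow a path such as 'reviews.five_star[0]' through nested dicts and lists."""
    value = item
    # Tokens are either attribute names or list indexes like [0].
    for token in re.findall(r"[^.\[\]]+|\[\d+\]", path):
        if token.startswith("["):
            value = value[int(token[1:-1])]  # list index
        else:
            value = value[token]             # map attribute
    return value


item = {
    "id": 1,
    "reviews": {"five_star": ["great", "ok"], "one_star": ["bad"]},
}
print(resolve_path(item, "reviews.five_star[0]"))  # great
print(resolve_path(item, "id"))                    # 1
```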

39. A company is deploying a new two-tier web application in AWS. The company has limited staff and requires high availability, and the application requires complex queries and table joins. Which configuration provides the solution for the company's requirements?

- MySQL Installed on two Amazon EC2 Instances in a single Availability Zone

- Amazon RDS for MySQL with Multi-AZ

- Amazon ElastiCache

- Amazon DynamoDB

Answer D.

Explanation: DynamoDB has the ability to scale more than RDS or any other relational database service, therefore DynamoDB would be the apt choice.

40. What happens to my backups and DB Snapshots if I delete my DB Instance?

When you delete a DB instance, you have an option of creating a final DB snapshot; if you do that, you can restore your database from that snapshot. RDS retains this user-created DB snapshot along with all other manually created DB snapshots after the instance is deleted. Automated backups, however, are deleted, and only manually created DB Snapshots are retained.

41. Which of the following use cases are suitable for Amazon DynamoDB? Choose two answers

- Managing web sessions.

- Storing JSON documents.

- Storing metadata for Amazon S3 objects.

- Running relational joins and complex updates.

Respond C,D.

Explanation: If all your JSON data have the same fields eg [id,name,historic period] then it would exist better to store information technology in a relational database, the metadata on the other hand is unstructured, also running relational joins or complex updates would work on DynamoDB as well.

42. How can I load my data to Amazon Redshift from different data sources like Amazon RDS, Amazon DynamoDB and Amazon EC2?

You can load the data in the following two ways:

- You can use the COPY command to load data in parallel directly to Amazon Redshift from Amazon EMR, Amazon DynamoDB, or any SSH-enabled host.

- AWS Data Pipeline provides a high performance, reliable, fault tolerant solution to load data from a variety of AWS data sources. You can use AWS Data Pipeline to specify the data source and the desired data transformations, and then execute a pre-written import script to load your data into Amazon Redshift.
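To make the first option concrete, here is a minimal sketch that assembles a COPY statement for loading from a DynamoDB table. The table names and the IAM role ARN are placeholders, and `build_copy_from_dynamodb` is a helper made up for this example; only the `COPY ... FROM 'dynamodb://...' IAM_ROLE ... READRATIO ...` form itself comes from Redshift's SQL syntax.

```python
def build_copy_from_dynamodb(target_table, dynamodb_table, iam_role_arn, read_ratio=50):
    """Return a Redshift COPY statement that loads from a DynamoDB table.

    READRATIO is the percentage of the DynamoDB table's provisioned
    read throughput that the load is allowed to consume.
    """
    if read_ratio < 1:
        raise ValueError("read_ratio must be a positive percentage")
    return (
        f"COPY {target_table} "
        f"FROM 'dynamodb://{dynamodb_table}' "
        f"IAM_ROLE '{iam_role_arn}' "
        f"READRATIO {read_ratio};"
    )


statement = build_copy_from_dynamodb(
    target_table="sensor_readings",                            # hypothetical Redshift table
    dynamodb_table="SensorReadings",                           # hypothetical DynamoDB table
    iam_role_arn="arn:aws:iam::111122223333:role/RedshiftCopy",  # placeholder role
)
print(statement)
```

The statement is built by plain string formatting, so in this sketch the names are assumed to come from trusted configuration rather than user input.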

43. Your application has to retrieve data from your user's mobile every 5 minutes and the data is stored in DynamoDB. Later, every day at a particular time, the data is extracted into S3 on a per-user basis, and your application is then used to visualize the data to the user. You are asked to optimize the architecture of the backend system to lower cost. What would you recommend?

- Create a new Amazon DynamoDB table each day and drop the one for the previous day after its data is on Amazon S3.

- Introduce an Amazon SQS queue to buffer writes to the Amazon DynamoDB table and reduce provisioned write throughput.

- Introduce Amazon Elasticache to cache reads from the Amazon DynamoDB table and reduce provisioned read throughput.

- Write data direct into an Amazon Redshift cluster replacing both Amazon DynamoDB and Amazon S3.

Answer C.

Explanation: Since our work requires the data to be extracted and analyzed, a person would use provisioned IO to optimize this process. But since that is expensive, using ElastiCache instead to cache the results in memory can reduce the provisioned read throughput and hence reduce cost without affecting performance.
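The caching idea can be sketched in a few lines. This is an in-memory stand-in only: the dictionary plays the role of ElastiCache, the `load_from_table` callable plays the role of a DynamoDB read, and the class name `CacheAside` is invented for the example.

```python
class CacheAside:
    """Cache-aside reads: check the cache first, fall back to the table."""

    def __init__(self, load_from_table):
        self._load_from_table = load_from_table  # stand-in for a DynamoDB read
        self._cache = {}                          # stand-in for ElastiCache
        self.table_reads = 0                      # reads that consumed table throughput

    def get(self, key):
        if key in self._cache:
            return self._cache[key]
        self.table_reads += 1
        value = self._load_from_table(key)
        self._cache[key] = value
        return value


table = {"user-1": {"steps": 4200}}   # hypothetical stored item
cache = CacheAside(table.get)
first = cache.get("user-1")    # miss: reads the table
second = cache.get("user-1")   # hit: served from memory
print(cache.table_reads)
```

Only the first lookup touches the table, which is why fewer provisioned reads are needed. A real cache would also need an expiry policy so that stale items are refreshed; that is left out here.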

44. You are running a website on EC2 instances deployed across multiple Availability Zones with a Multi-AZ RDS MySQL Extra Large DB Instance. The site performs a high number of small reads and writes per second and relies on an eventual consistency model. After comprehensive tests you observe that there is read contention on RDS MySQL. Which are the best approaches to meet these requirements? (Choose two answers)

- Deploy ElastiCache in-memory cache running in each availability zone

- Implement sharding to distribute load to multiple RDS MySQL instances

- Increase the RDS MySQL Instance size and Implement provisioned IOPS

- Add an RDS MySQL read replica in each availability zone

Answer A,C.

Explanation: Since the site does a lot of reads and writes, provisioned IO may become expensive. But we need high performance as well, therefore frequently read data can be cached using ElastiCache. As for RDS, since read contention is happening, the instance size should be increased and provisioned IO should be introduced to increase performance.

45. A startup is running a pilot deployment of around 100 sensors to measure street noise and air quality in urban areas for 3 months. It was noted that every month around 4GB of sensor data is generated. The company uses a load balanced auto scaled layer of EC2 instances and an RDS database with 500 GB standard storage. The pilot was a success and now they want to deploy at least 100K sensors which need to be supported by the backend. You need to store the data for at least 2 years to analyze it. Which of the following setups would you prefer?

- Add an SQS queue to the ingestion layer to buffer writes to the RDS instance

- Ingest data into a DynamoDB table and move old data to a Redshift cluster

- Replace the RDS instance with a 6 node Redshift cluster with 96TB of storage

- Keep the current architecture just upgrade RDS storage to 3TB and 10K provisioned IOPS

Answer C.

Explanation: A Redshift cluster would be preferred because it is easy to scale, and the work is done in parallel across the nodes, so it is perfect for a bigger workload like our use case. Since 4 GB of data is generated each month, 2 years of data from the pilot would be around 96 GB. And since the number of sensors will be increased from 100 to 100K, a factor of 1,000, 96 GB will become approximately 96 TB. Hence option C is the right answer.
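The sizing estimate can be checked with a few lines of arithmetic. The figures come from the question; the only assumptions are that data volume grows linearly with the number of sensors and that 1 TB is counted as 1,000 GB.

```python
PILOT_SENSORS = 100
PILOT_GB_PER_MONTH = 4
TARGET_SENSORS = 100_000
RETENTION_MONTHS = 24  # 2 years

# Data kept for 2 years at pilot scale.
pilot_total_gb = PILOT_GB_PER_MONTH * RETENTION_MONTHS

# Assume volume scales linearly with the number of sensors.
scale_factor = TARGET_SENSORS // PILOT_SENSORS
target_total_gb = pilot_total_gb * scale_factor
target_total_tb = target_total_gb / 1000  # decimal terabytes

print(pilot_total_gb, scale_factor, target_total_gb, target_total_tb)
```

The estimate far exceeds the 3 TB offered by the RDS upgrade option, which is why that option falls short.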

Section 6: AWS Auto Scaling, AWS Load Balancer

46. Suppose you have an application where you have to render images and also do some general computing. From the following services, which service will best fit your need?

- Classic Load Balancer

- Application Load Balancer

- Both of them

- None of these

Answer B.

Explanation: You will choose an Application Load Balancer, since it supports path-based routing, which means it can make decisions based on the URL. If your task needs image rendering, it will route the request to one set of instances, and for general computing it will route the request to a different set of instances.
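Path-based routing can be pictured as a small lookup from URL prefix to target group. The sketch below is a plain Python simulation, not load balancer configuration; the path prefix and the target group names are hypothetical.

```python
# (path prefix, target group) pairs, checked in order.
ROUTING_RULES = [
    ("/render/", "image-rendering-targets"),   # hypothetical target group
]
DEFAULT_TARGET_GROUP = "general-compute-targets"  # hypothetical target group


def choose_target_group(path):
    """Return the target group for a request path; the first match wins."""
    for prefix, target_group in ROUTING_RULES:
        if path.startswith(prefix):
            return target_group
    return DEFAULT_TARGET_GROUP


print(choose_target_group("/render/thumbnail.png"))
print(choose_target_group("/api/report"))
```

A Classic Load Balancer has no equivalent of these rules, so both kinds of request would land on the same pool of instances.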

47. What is the difference between Scalability and Elasticity?

Scalability is the ability of a system to increase its hardware resources to handle an increase in demand. It can be done by increasing the hardware specifications or by increasing the number of processing nodes.

Elasticity is the ability of a system to handle an increase in workload by adding hardware resources when demand increases (the same as scaling), but also to roll back the scaled resources when they are no longer needed. This is particularly helpful in Cloud environments, where a pay-per-use model is followed.
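The difference shows up when demand falls again. In the sketch below, the capacity of 100 requests per second per instance and the demand figures are made-up numbers, and `desired_instances` is an illustrative helper rather than an AWS API.

```python
import math


def desired_instances(requests_per_second, capacity_per_instance,
                      min_instances=1, max_instances=10):
    """Instances needed for the current demand, kept within fleet limits."""
    needed = math.ceil(requests_per_second / capacity_per_instance)
    return max(min_instances, min(max_instances, needed))


demand = [50, 400, 900, 300, 20]   # hypothetical requests per second over time
fleet = [desired_instances(rps, capacity_per_instance=100) for rps in demand]
print(fleet)
```

A system that is only scalable would stay at its peak size once demand drops; an elastic one follows demand back down, which is where the pay-per-use saving comes from.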

48. How will you change the instance type for instances which are running in your application tier and are using Auto Scaling? Where will you change it from the following areas?

- Auto Scaling policy configuration

- Auto Scaling group

- Auto Scaling tags configuration

- Auto Scaling launch configuration

Answer D.

Explanation: The Auto Scaling tags configuration is used to attach metadata to your instances. To change the instance type, you have to use the Auto Scaling launch configuration.

49. You have a content management system running on an Amazon EC2 instance that is approaching 100% CPU utilization. Which option will reduce load on the Amazon EC2 instance?

- Create a load balancer, and register the Amazon EC2 instance with it

- Create a CloudFront distribution, and configure the Amazon EC2 instance as the origin

- Create an Auto Scaling group from the instance using the CreateAutoScalingGroup action

- Create a launch configuration from the instance using the CreateLaunchConfiguration action

Answer A.

Explanation: Creating an Auto Scaling group alone will not solve the issue until you attach a load balancer to it. Once you attach a load balancer to an Auto Scaling group, it will efficiently distribute the load among all the instances. Option B: CloudFront is a CDN, it is a data transfer tool and therefore will not help reduce load on the EC2 instance. Similarly, the other option, a launch configuration, is a template for configuration which has no connection with reducing load.

50. When should I use a Classic Load Balancer and when should I use an Application Load Balancer?

A Classic Load Balancer is ideal for simple load balancing of traffic across multiple EC2 instances, while an Application Load Balancer is ideal for microservices or container-based architectures where there is a need to route traffic to multiple services or load balance across multiple ports on the same EC2 instance.

For a detailed discussion on Auto Scaling and Load Balancer, please refer to our EC2 AWS blog.

51. What does Connection draining do?

- Terminates instances which are not in use.

- Re-routes traffic from instances which are to be updated or failed a health check.

- Re-routes traffic from instances which have more workload to instances which have less workload.

- Drains all the connections from an instance, with one click.

Answer B.

Explanation: Connection draining is a feature of ELB which works together with its constant monitoring of instance health. If any instance fails a health check, or if any instance has to be patched with a software update, it pulls all the traffic from that instance and re-routes it to other instances.

52. When an instance is unhealthy, it is terminated and replaced with a new one. Which of the following services does that?

- Sticky Sessions

- Fault Tolerance

- Connection Draining

- Monitoring

Answer B.

Explanation: When ELB detects that an instance is unhealthy, it starts routing incoming traffic to other healthy instances in the region. If all the instances in an Availability Zone become unhealthy, and you have instances in another Availability Zone, your traffic is directed to them. Once your instances become healthy again, traffic is routed back to the original instances.

53. What are lifecycle hooks used for in AutoScaling?

- They are used to do health checks on instances

- They are used to add a wait time to a scale in or scale out event.

- They are used to shorten the wait time to a scale in or scale out event

- None of these

Answer B.

Explanation: Lifecycle hooks are used for adding a wait time before any lifecycle action, i.e. launching or terminating an instance, happens. The purpose of this wait time can be anything from extracting log files before terminating an instance to installing the necessary software on an instance before launching it.
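As a sketch of the log-extraction case, the helper below assembles the parameters for a hook that holds a terminating instance for a fixed time. The group name, hook name and five-minute timeout are assumptions for the example, and `build_termination_hook` is not an SDK function; the dictionary keys are the documented parameters of the Auto Scaling `PutLifecycleHook` action.

```python
def build_termination_hook(group_name, hook_name, wait_seconds):
    """Parameters for a lifecycle hook that pauses instance termination."""
    return {
        "AutoScalingGroupName": group_name,
        "LifecycleHookName": hook_name,
        # Fire when Auto Scaling is about to terminate an instance.
        "LifecycleTransition": "autoscaling:EC2_INSTANCE_TERMINATING",
        # How long the instance waits before the default result applies.
        "HeartbeatTimeout": wait_seconds,
        # CONTINUE lets termination proceed once the wait is over.
        "DefaultResult": "CONTINUE",
    }


hook = build_termination_hook(
    group_name="app-tier-asg",       # hypothetical Auto Scaling group
    hook_name="export-logs",         # hypothetical hook name
    wait_seconds=300,
)
# With boto3 installed and credentials configured, this could be sent as:
#   boto3.client("autoscaling").put_lifecycle_hook(**hook)
```

For the launch case, the transition would be `autoscaling:EC2_INSTANCE_LAUNCHING` instead.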

54. A user has set up an Auto Scaling group. Due to some issue the group has failed to launch a single instance for more than 24 hours. What will happen to Auto Scaling in this condition?

- Auto Scaling will keep trying to launch the instance for 72 hours

- Auto Scaling will suspend the scaling process

- Auto Scaling will start an instance in a separate region

- The Auto Scaling group will be terminated automatically

Answer B.

Explanation: Auto Scaling allows you to suspend and then resume one or more of the Auto Scaling processes in your Auto Scaling group. This can be very useful when you want to investigate a configuration problem or other issue with your web application, and then make changes to your application, without triggering the Auto Scaling process.

Section 7: CloudTrail, Route 53

55. You have an EC2 Security Group with several running EC2 instances. You changed the Security Group rules to allow inbound traffic on a new port and protocol, and then launched several new instances in the same Security Group. The new rules apply:

- Immediately to all instances in the security group.

- Immediately to the new instances only.

- Immediately to the new instances, but old instances must be stopped and restarted before the new rules apply.

- To all instances, but it may take several minutes for old instances to see the changes.

Answer A.

Explanation: Any rule specified in an EC2 Security Group applies immediately to all the instances, irrespective of whether they were launched before or after the rule was added.

56. To create a mirror image of your environment in another region for disaster recovery, which of the following AWS resources do not need to be recreated in the second region? (Choose two answers)

- Route 53 Record Sets

- Elastic IP Addresses (EIP)

- EC2 Key Pairs

- Launch configurations

- Security Groups

Answer A.

Explanation: Route 53 record sets are common assets, therefore there is no need to replicate them, since Route 53 is valid across regions.

57. A client wants to capture all client connection information from his load balancer at an interval of 5 minutes. Which of the following options should he choose for his application?

- Enable AWS CloudTrail for the load balancer.

- Enable access logs on the load balancer.

- Install the Amazon CloudWatch Logs agent on the load balancer.

- Enable Amazon CloudWatch metrics on the load balancer.

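Answer B.

Explanation: Elastic Load Balancing access logs capture detailed information about each request sent to the load balancer, including the client's IP address, latencies, request paths and server responses, and they can be published at 5-minute intervals. AWS CloudTrail records API calls made against the load balancer rather than client connections, so it does not fit this use case.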

58. A client wants to track access to their Amazon Simple Storage Service (S3) buckets and also use this information for their internal security and access audits. Which of the following will meet the Client requirement?

- Enable AWS CloudTrail to audit all Amazon S3 bucket access.

- Enable server access logging for all required Amazon S3 buckets.

- Enable the Requester Pays option to track access via AWS Billing

- Enable Amazon S3 event notifications for Put and Post.

Answer A.

Explanation: AWS CloudTrail has been designed for logging and tracking API calls. This service is also available for storage, and therefore should be used in this use case.

59. Which of the following are true regarding AWS CloudTrail? (Choose 2 answers)

- CloudTrail is enabled globally

- CloudTrail is enabled on a per-region and service basis

- Logs can be delivered to a single Amazon S3 bucket for aggregation.

- CloudTrail is enabled for all available services within a region.

Answer B,C.

Explanation: CloudTrail is not enabled for all the services and is also not available for all the regions. Therefore option B is correct. The logs can also be delivered to your S3 bucket, hence C is also correct.

60. What happens if CloudTrail is turned on for my account but my Amazon S3 bucket is not configured with the right policy?

CloudTrail files are delivered according to S3 bucket policies. If the bucket is not configured or is misconfigured, CloudTrail might not be able to deliver the log files.
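For reference, a bucket policy that lets CloudTrail deliver logs generally needs two statements: one so the service can read the bucket ACL and one so it can write objects under the account's `AWSLogs/` prefix. The sketch below generates such a policy; the bucket name and account ID are placeholders, and `cloudtrail_bucket_policy` is a helper written for this example.

```python
import json


def cloudtrail_bucket_policy(bucket_name, account_id):
    """Return an S3 bucket policy document allowing CloudTrail log delivery."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # CloudTrail checks the bucket ACL before delivering logs.
                "Sid": "AWSCloudTrailAclCheck",
                "Effect": "Allow",
                "Principal": {"Service": "cloudtrail.amazonaws.com"},
                "Action": "s3:GetBucketAcl",
                "Resource": f"arn:aws:s3:::{bucket_name}",
            },
            {
                # CloudTrail writes log files under AWSLogs/<account id>/.
                "Sid": "AWSCloudTrailWrite",
                "Effect": "Allow",
                "Principal": {"Service": "cloudtrail.amazonaws.com"},
                "Action": "s3:PutObject",
                "Resource": f"arn:aws:s3:::{bucket_name}/AWSLogs/{account_id}/*",
                "Condition": {
                    "StringEquals": {"s3:x-amz-acl": "bucket-owner-full-control"}
                },
            },
        ],
    }


policy = cloudtrail_bucket_policy("my-trail-logs", "111122223333")  # placeholders
print(json.dumps(policy, indent=2))
```

If either statement is missing or points at the wrong bucket or prefix, delivery is likely to fail in the way described above.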

61. How do I transfer my existing domain name registration to Amazon Route 53 without disrupting my existing web traffic?

You will need to get a list of the DNS record data for your domain name first; it is generally available in the form of a "zone file" that you can get from your existing DNS provider. Once you receive the DNS record data, you can use Route 53's Management Console or simple web-services interface to create a hosted zone that will store the DNS records for your domain name. The process also includes steps such as updating the nameservers for your domain name to the ones associated with your hosted zone. To complete the process, you have to contact the registrar with whom you registered your domain name and follow the transfer process. As soon as your registrar propagates the new name server delegations, your DNS queries will start to get answered.

Section 8: AWS SQS, AWS SNS, AWS SES, AWS ElasticBeanstalk

62. Which of the following services would you not use to deploy an app?

- Elastic Beanstalk

- Lambda

- Opsworks

- CloudFormation

Answer B.

Explanation: Lambda is used for running serverless applications. It can be used to deploy functions triggered by events. When we say serverless, we mean without you worrying about the computing resources running in the background. It is not designed for creating applications which are publicly accessed.

63. How does Elastic Beanstalk apply updates?

- By having a duplicate ready with updates before swapping.

- By updating on the instance while it is running

- By taking the instance down in the maintenance window

- Updates should be installed manually

Answer A.

Explanation: Elastic Beanstalk prepares a duplicate copy of the instance before updating the original instance, and routes your traffic to the duplicate instance, so that, in case your updated application fails, it will switch back to the original instance, and there will be no downtime experienced by the users who are using your application.

64. How is AWS Elastic Beanstalk different from AWS OpsWorks?

AWS Elastic Beanstalk is an application management platform while OpsWorks is a configuration management platform. Beanstalk is an easy-to-use service for deploying and scaling web applications developed with Java, .NET, PHP, Node.js, Python, Ruby, Go and Docker. Customers upload their code and Elastic Beanstalk automatically handles the deployment. The application will be ready to use without any infrastructure or resource configuration.

In contrast, AWS OpsWorks is an integrated configuration management platform for IT administrators or DevOps engineers who want a high degree of customization and control over operations.

65. What happens if my application stops responding to requests in Beanstalk?

AWS Beanstalk applications have a system in place for avoiding failures in the underlying infrastructure. If an Amazon EC2 instance fails for any reason, Beanstalk will use Auto Scaling to automatically launch a new instance. Beanstalk can also detect if your application is not responding on the custom link; even though the infrastructure appears healthy, this will be logged as an environmental event (e.g. a bad version was deployed) so you can take appropriate action.

For a detailed discussion on this topic, please refer to our Lambda AWS blog.

Section 9: AWS OpsWorks, AWS KMS

66. How is AWS OpsWorks different from AWS CloudFormation?

OpsWorks and CloudFormation both support application modelling, deployment, configuration, management and related activities. Both support a wide variety of architectural patterns, from simple web applications to highly complex applications. AWS OpsWorks and AWS CloudFormation differ in abstraction level and areas of focus.

AWS CloudFormation is a building block service which enables customers to manage almost any AWS resource via a JSON-based domain specific language. It provides foundational capabilities for the full breadth of AWS, without prescribing a particular model for development and operations. Customers define templates and use them to provision and manage AWS resources, operating systems and application code.

In contrast, AWS OpsWorks is a higher level service that focuses on providing highly productive and reliable DevOps experiences for IT administrators and ops-minded developers. To do this, AWS OpsWorks employs a configuration management model based on concepts such as stacks and layers, and provides integrated experiences for key activities like deployment, monitoring, auto-scaling, and automation. Compared to AWS CloudFormation, AWS OpsWorks supports a narrower range of application-oriented AWS resource types including Amazon EC2 instances, Amazon EBS volumes, Elastic IPs, and Amazon CloudWatch metrics.

67. I created a key in the Oregon region to encrypt my data in the North Virginia region for security purposes. I added two users to the key and an external AWS account. I wanted to encrypt an object in S3, but when I tried, the key that I just created was not listed. What could be the reason?

- External AWS accounts are not supported.

- AWS S3 cannot be integrated with KMS.

- The Key should be in the same region.

- New keys take some time to reflect in the list.

Answer C.

Explanation: The key created and the data to be encrypted should be in the same region. Hence the approach taken here to secure the data is wrong.
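A quick way to see the mismatch is to compare the region embedded in the key's ARN with the bucket's region. The sketch below uses a made-up key ARN; `us-west-2` is the Oregon region and `us-east-1` is North Virginia. The helper names are invented for this example.

```python
def kms_key_region(key_arn):
    """Extract the region from a KMS key ARN such as
    arn:aws:kms:us-west-2:111122223333:key/<key id>."""
    parts = key_arn.split(":")
    if len(parts) < 6 or parts[2] != "kms":
        raise ValueError("not a KMS key ARN")
    return parts[3]


def key_usable_for_bucket(key_arn, bucket_region):
    """True only when the key lives in the same region as the bucket."""
    return kms_key_region(key_arn) == bucket_region


# Placeholder ARN for a key created in Oregon.
key_arn = "arn:aws:kms:us-west-2:111122223333:key/1234abcd-12ab-34cd-56ef-1234567890ab"

print(key_usable_for_bucket(key_arn, "us-east-1"))  # bucket in North Virginia
print(key_usable_for_bucket(key_arn, "us-west-2"))  # bucket in Oregon
```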

68. A company needs to monitor the read and write IOPS for their AWS MySQL RDS instance and send real-time alerts to their operations team. Which AWS services can achieve this?

- Amazon Simple Email Service

- Amazon CloudWatch

- Amazon Simple Queue Service

- Amazon Route 53

Answer B.

Explanation: Amazon CloudWatch is a cloud monitoring tool and hence this is the right service for the mentioned use case. The other options listed here are used for other purposes, for example Route 53 is used for DNS services, therefore CloudWatch will be the apt choice.
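As an illustration, the sketch below assembles the parameters for an alarm on an RDS instance's `ReadIOPS` metric that notifies an SNS topic. The instance identifier, threshold and topic ARN are invented for the example, and `build_iops_alarm` is not an SDK function; the dictionary keys are documented parameters of the CloudWatch `PutMetricAlarm` action, and `AWS/RDS`, `ReadIOPS` and `WriteIOPS` are the namespace and metric names RDS publishes.

```python
def build_iops_alarm(db_instance_id, metric_name, threshold, sns_topic_arn):
    """Parameters for a CloudWatch alarm on an RDS IOPS metric."""
    if metric_name not in ("ReadIOPS", "WriteIOPS"):
        raise ValueError("metric_name must be ReadIOPS or WriteIOPS")
    return {
        "AlarmName": f"{db_instance_id}-{metric_name}-high",
        "Namespace": "AWS/RDS",
        "MetricName": metric_name,
        "Dimensions": [{"Name": "DBInstanceIdentifier", "Value": db_instance_id}],
        "Statistic": "Average",
        "Period": 60,                 # evaluate one-minute averages
        "EvaluationPeriods": 1,
        "Threshold": threshold,
        "ComparisonOperator": "GreaterThanThreshold",
        # The operations team subscribes to this topic to get the alert.
        "AlarmActions": [sns_topic_arn],
    }


alarm = build_iops_alarm(
    db_instance_id="prod-mysql",                                  # hypothetical instance
    metric_name="ReadIOPS",
    threshold=5000,                                               # hypothetical limit
    sns_topic_arn="arn:aws:sns:us-east-1:111122223333:ops-alerts",  # placeholder topic
)
# With boto3 installed and credentials configured, this could be sent as:
#   boto3.client("cloudwatch").put_metric_alarm(**alarm)
```

A second alarm with `metric_name="WriteIOPS"` would cover the write side.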

69. What happens when one of the resources in a stack cannot be created successfully in AWS OpsWorks?

When an issue like this occurs, the "automatic rollback on error" feature is enabled, which causes all the AWS resources which were created successfully up to the point where the error occurred to be deleted. This is helpful since it does not leave behind any erroneous data; it ensures that stacks are either created fully or not created at all. It is useful in events where you may accidentally exceed your limit on the number of Elastic IP addresses, or where you may not have access to an EC2 AMI that you are trying to run, etc.
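The all-or-nothing behaviour can be simulated in a few lines. This is a toy model, not AWS code: the resource names are invented, and the `create` and `delete` callables stand in for whatever actually provisions and removes a resource.

```python
def create_stack(resources, create, delete):
    """Create resources in order; on any failure, delete the ones already
    created (newest first) so nothing is left half-built."""
    created = []
    try:
        for resource in resources:
            create(resource)
            created.append(resource)
    except Exception:
        for resource in reversed(created):
            delete(resource)
        return []
    return created


live = set()  # resources that currently exist


def create(name):
    # Simulate hitting the Elastic IP limit on one resource.
    if name == "elastic-ip-6":
        raise RuntimeError("Elastic IP limit exceeded")
    live.add(name)


result = create_stack(["instance-1", "volume-1", "elastic-ip-6"], create, live.discard)
print(result, live)
```

After the failure on the third resource, the two that had been created are removed again, so the stack ends up not created at all rather than partially created.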

70. What automation tools can you use to spin up servers?

Any of the following tools can be used:

- Roll-your-own scripts, and use the AWS API tools. Such scripts could be written in bash, perl or another language of your choice.

- Use a configuration management and provisioning tool like Puppet or its successor Opscode Chef. You can also use a tool like Scalr.

- Use a managed solution such as Rightscale.

Overwhelmed with all these questions?

We at Edureka are here to help you with every step on your journey to becoming an AWS Solution Architect. Besides these AWS Architect Interview Questions, we have therefore come up with a curriculum which covers exactly what you would need to crack the Solution Architect Exam! You can have a look at the course details for AWS training in Chennai.

I hope you enjoyed these AWS Interview Questions. The topics that you learnt in this AWS Architect Interview Questions blog are the most sought-after skill sets that recruiters look for in an AWS Solution Architect Professional. I have tried touching upon AWS interview questions and answers for freshers, and you would also find AWS interview questions for people with 3-5 years of experience. However, for a more detailed study on AWS, you can refer to our AWS Tutorial.

Got a question for us? Please mention it in the comments section of this AWS Architect Interview Questions blog and we will get back to you.


Source: https://www.edureka.co/blog/interview-questions/top-aws-architect-interview-questions-2016/
